The Emotion Machine: Commonsense Thinking, Artificial Intelligence, and the Future of the Human Mind

Marvin Lee Minsky, Ph.D.


If you get above a few thousand rules, then you start to get conflicts where too many rules apply to a situation. Then you need judicial procedures, and nobody has really debugged a rule-based system that promotes any kind of legal argument.

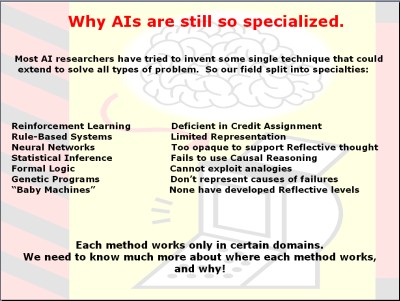

Statistics is the dominant thing right now. Most researchers try to collect experience and just summarize it in terms of conditional probabilities. That is great, except that if something new happens it won't help you. Neural networks you know about, and formal logic is popular. Here you have seven different approaches. In most of the AI communities, most researchers work on one or the other because they think that is the answer. Hawkins [1] proposed a sort of hierarchical learning system that might be slightly different from some of these. However, again, it is just sort of a uniform way to do everything, and that is not how people work. Each of these methods is good for some problems but not for others. Here is the trouble: we are in a community that used to be supported by the government and by philanthropy; now it is supported by investors. If your system does not work, you are not going to advertise it.

The thing we most desperately need to know is what kinds of problems neural networks can solve and what kinds they cannot solve. What kinds of problems can be solved by logic and which cannot? Logic cannot reason by analogies, for example. What kinds of processes can evolve by genetics and which need intelligent creators to help? The simple answer to that one is that genetics can only build creatures that know how to solve relatively small numbers of very serious problems, because you have just so many genes.

A child knows ten million things. When you tell children fairy tales, they learn tens of thousands of bad things that happen to people. Genetics cannot do that. Genetics can learn to avoid a situation where you have even a 0.1 percent better chance of survival if you react in a certain way. But that means you can only learn a few thousand things.

What genetics cannot do is accumulate a huge knowledge base. It could if you had four billion nucleotides in your genome. If that were a real database, you would not have to go to school. Genetics remembers why your ancestors survived, but it keeps no record of why almost everyone else died. It is an interesting fact that you do not see.

Of course, you do not need an intelligent creator, you need a society that develops memes [2] and words. Humans take a long time to mature. A good thing that evolution did was to handicap babies so that they could not escape from their parents, that they were dependent and had to hang around and learn their memes. It was not just for getting food.

What is the answer? The answer is that to make a smart machine you have to give it different ways to think, not just one. I think it is no accident that we have hundreds of different brain centers rather than one really elegant one. Neuroscientists do not know what they do yet, so there is a big gap between knowing how neurons work and knowing how higher-level self-reflective thinking works.

Even if you knew exactly how neurons work, you would not be very far along. We know exactly why the arsenic and phosphorus in a transistor make the transistor into an amplifier. We know exactly why computers do not depend on how the transistor works, because if you stick two transistors together out of phase then you get a flip-flop, which is either on or off, and the properties of the individual transistors do not matter.

What I think is important in the brain is that most of the brain is made of these cortical columns, each of which has a few hundred or a thousand cells. I think these are what insulate the higher levels of thinking from the kind of learning that a jellyfish or a sea anemone can do; that is, the cortical columns are to neurons as the flip-flops are to the transistors. There is a lack of communication between some of us AI people and the neuroscientists because they say, well, the chemicals are important, and we say they are not. This conflict will be resolved by the next generation of students; it won't be resolved by the people working today.

My conclusion is that you want the machine to use logic sometimes, neural nets sometimes, statistics sometimes, and genetic algorithms sometimes. The best way to learn is to listen to other people, build common sense databases, and so forth. At different moments, our brains cleverly switch to using one method or another, and that is why we are so smart.

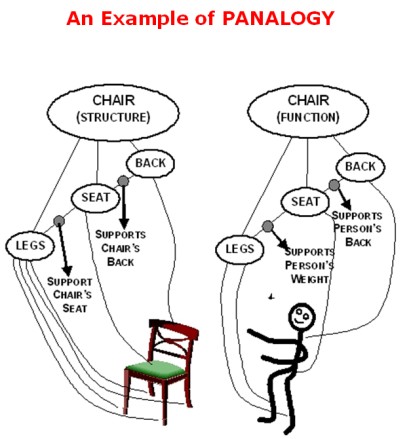

When you learn something, you represent it in several different ways. What is a chair? From one point of view, you might be interested in its structure. A chair is legs, seat and back arranged so that the legs support the seat, and the seat supports the back. Another way to look at the chair is to represent it as a device for making people comfortable by keeping your weight off your legs and thighs and supporting your back etc. Another way to represent a chair is that it is an article of commerce and the chair might cost $20, or $20,000 if it's an antique, and so forth.

All normal children learn to represent things in maybe ten different ways, and that is another thing that we have not yet seen in computer programs, but it is bound to come. Various chapters of my book discuss this idea I call panalogy (parallel analogy). If you understand something in just one way, you are likely to get stuck, because if it doesn't work then you screech to a halt. However, if you have five or six ways to represent each new thing that you learn, then when one interpretation does not work, in a tenth of a second or less you can switch to thinking of it in another way. I imagine that some of the brain rhythms are simply systems that allow you to switch between five or six different viewpoints several times a second and so forth.
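The idea of keeping several representations of one thing and switching when one of them fails can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the dictionary layout, the key names, and the `answer` function are hypothetical and do not come from the talk or the book; only the three views of a chair (structure, function, commerce) are taken from the text above.

```python
# Three views of a chair, taken from the talk. The dictionary layout and
# key names are assumptions made for this sketch.
CHAIR = {
    "structure": {
        "parts": ["legs", "seat", "back"],
        "supports": {"legs": "seat", "seat": "back"},
    },
    "function": {
        "purpose": "keep weight off your legs and support your back",
    },
    "commerce": {
        "typical_price_usd": 20,
        "antique_price_usd": 20000,
    },
}


def answer(panalogy, key):
    """Try each representation in turn and switch views when one has no answer.

    Returns (view_name, value), or (None, None) if every view fails.
    """
    for view_name, view in panalogy.items():
        if key in view:
            return view_name, view[key]
    return None, None


if __name__ == "__main__":
    # The structural view cannot say what a chair is for, so the lookup
    # falls through to the functional view.
    print(answer(CHAIR, "purpose"))
    # No view knows the color, so the lookup reports failure.
    print(answer(CHAIR, "color"))
```

A single-representation system corresponds to a dictionary with one view: the first question that view cannot answer leaves it with nothing else to try.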

Let me summarize all this by asking: what is the critical thing here now? Suppose that we agree that what makes people smart and resourceful and more flexible than all other animals is that whenever you get stuck thinking in one way you can switch to another very quickly, in less than a second. Well, but how do you know when to switch, how do you recognize when you get stuck, and what do you switch to? The central thread of The Emotion Machine is suggesting a very interesting high-level architecture that is almost the same as the idiotic one that Pavlov and Watson [3] suggested in 1900 or in the early days of behaviorism. Mainly we are rule-based systems. If a certain kind of thing happens, we react to it in a certain way, but at the lowest level, if something is pinching you, you move your arm. That is even built into your spinal cord so you do not have to learn it.

At the higher level, you could say, well, I have been thinking about politics from an economic view, maybe I should think about it from a sociological view. Something recognizes that you are stuck, it recognizes the way in which you are stuck, and you switch to another way to think. The most important high-level thing is the reflective part of your brain that recognizes the kind of stuck-ness you are in. In other words, you are not reacting to the situation, to the world; the highest parts of your brain are reacting to what they see in the next lower levels, and they say, well, if a problem seems familiar and I can't solve it, try reasoning by analogy: does it remind me of another problem I did solve? If it seems unfamiliar, change the way you described it. If a problem still seems too hard, find a way to split it into two or more parts. If it is too complicated, just simplify it. Ignore most of it and solve the simplified problem, and then if you are lucky you will be able to fit the rest. What is the best one? If none of those work, ask someone for help.
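The rules listed in this paragraph read like a small table that maps a kind of stuck-ness to a way to think. The sketch below writes them out in Python. It is a minimal illustration, not the architecture from the book: the `ProblemState` fields, the `CRITICS` table, the `select_way_to_think` function, and the choice to check the rules in the order they were spoken are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ProblemState:
    """Hypothetical summary of how a stuck problem looks to the reflective level."""
    familiar: bool = False
    too_hard: bool = False
    too_complicated: bool = False
    tried: tuple = ()  # ways to think that were already tried without success


# (condition, way to think) pairs, in the order the talk lists them.
CRITICS = [
    (lambda s: s.familiar, "reason by analogy"),
    (lambda s: not s.familiar, "change the description"),
    (lambda s: s.too_hard, "split into parts"),
    (lambda s: s.too_complicated, "simplify"),
]


def select_way_to_think(state):
    """Return the first applicable way to think that has not been tried yet."""
    for applies, way in CRITICS:
        if applies(state) and way not in state.tried:
            return way
    # Last resort from the talk: if none of those work, ask someone for help.
    return "ask someone for help"


if __name__ == "__main__":
    state = ProblemState(familiar=True, too_hard=True)
    print(select_way_to_think(state))  # analogy is tried first
    state.tried = ("reason by analogy",)
    print(select_way_to_think(state))  # analogy failed, so split the problem
```

Note that the function never looks at the problem itself, only at a description of how the attempt to solve it is going, which is the point the paragraph makes about the higher levels reacting to the lower ones rather than to the world.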

The baby is the universal machine because whenever it is uncomfortable it makes a loud noise, and adults are programmed to find this irresistible. You cannot ignore the baby's cry, you either help it or kill it, and in rare cases it's the latter. Mostly, how long can you not attend to a baby, 30 seconds?

Footnotes

1. Jeff Hawkins (born June 1, 1957 in Huntington, New York) is the founder of Palm Computing (where he invented the Palm Pilot) and Handspring (where he invented the Treo). He has since turned to work on neuroscience full-time and has founded the Redwood Neuroscience Institute and published On Intelligence describing his memory-prediction framework theory of the brain. In 2003 he was elected as a member of the National Academy of Engineering "for the creation of the hand-held computing paradigm and the creation of the first commercially successful example of a hand-held computing device."

http://en.wikipedia.org/wiki/Jeff_Hawkins July 15, 2008 4:12PM EST

2. Memes: the idea of a “meme,” a package of information passed from one mind to another, was developed in Richard Dawkins, The Selfish Gene (New York: Oxford University Press, 1989). See also Susan Blackmore, The Meme Machine (New York: Oxford University Press, 1999), and Daniel C. Dennett, Darwin’s Dangerous Idea (New York: Simon and Schuster, 1995).

3. John Broadus Watson (January 9, 1878–September 25, 1958) was an American psychologist who established the psychological school of behaviorism, after doing research on animal behavior. He is known for having claimed that he could take any twelve healthy infants and, by applying behavioral techniques, create whatever kind of person he desired.

http://en.wikipedia.org/wiki/John_B._Watson July 15, 2008 4:39PM EST
